How AI highlights the flaws in grading

ChatGPT didn't break education, but it's a tempting scapegoat for lazy educators.

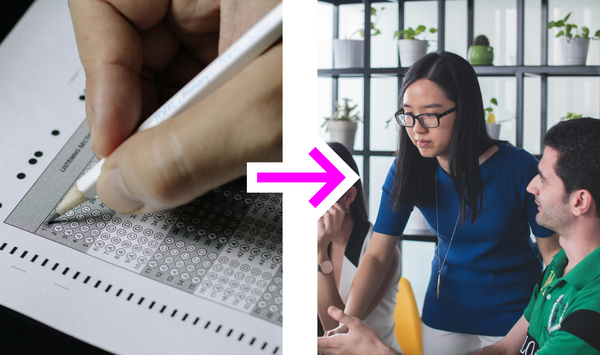

AI is going to make teachers work much harder. Not because ChatGPT is killing students’ thinking, but because the grading system had already killed it.

A quirk in how grades are assigned is the reason things are going to get worse if we don’t change. For subjects with a high degree of nuance, like writing, creative work, and the more advanced sciences, teachers often give grades in the C to B range for work that shows “sufficient” effort.

If you’ve ever graded before, you know what I mean: those assignments that meet all the criteria but aren’t actually good. The sort of thing a rubric allows but a decent manager would never accept. (If I’m alone in this, please reply to this email and tell me how you got around it.)

But as ChatGPT gets much better at giving “good enough” results, those C to B grades will become much harder to justify, mostly because it will be unclear whether the student put any thought into the work at all.

Without significantly changing the nature of our assignments and assessment criteria, we risk creating a binary grading system where students either excel or are impossible to distinguish from a machine. That would widen the gap between those with means and those without, and that is a dangerous thing to play with in a society that's already so fractured.